Every time you send a message to ChatGPT, Claude, or Gemini through their web interfaces, your text travels to a cloud server. It gets processed, logged, and in many cases stored for model training and safety review. For casual questions, that might be fine. But what about private conversations? Medical questions? Business strategy? Legal matters?

The demand for a local AI chatbot with real privacy is growing fast. People want the power of AI without sacrificing control over their data. This article explains why local-first AI matters, how OpenClaw Easy achieves it, and how it compares to cloud-only solutions.

The Privacy Problem with Cloud AI Chatbots

When you use a cloud-based AI chatbot, here is what typically happens to your data:

- Your message is transmitted over the internet to the AI provider's servers.

- The message is processed on the provider's infrastructure, often in a data center in another country.

- Your message may be logged for safety monitoring, abuse detection, and quality improvement.

- Your message may be used for training future AI models (depending on the provider's terms of service).

- The response travels back over the internet to your device.

Even providers that promise not to train on your data still receive and process it on their servers. That means your data exists, at least temporarily, on infrastructure you do not control.

For many users, this is perfectly acceptable. But for others -- healthcare professionals, lawyers, financial advisors, journalists, activists, or anyone dealing with sensitive information -- it is a serious concern.

What Does "Local-First" Actually Mean?

A local-first AI chatbot processes as much as possible on your own computer rather than sending data to the cloud. In the context of OpenClaw Easy, "local-first" means three things:

1. The Application Runs Locally

OpenClaw Easy is a desktop application that runs entirely on your macOS or Windows machine. It is not a web app. There is no browser session connecting to a remote server. The app, its configuration, your conversation history, and your API keys all live on your hard drive.

2. No Intermediate Cloud Server

When you connect WhatsApp, Telegram, or any other messaging channel, OpenClaw Easy handles the connection directly from your machine. Your messages are not routed through any additional third-party server beyond the messaging platform and the AI provider you choose. The data path is: messaging platform → your computer → AI provider (or local model) → your computer → messaging platform.

3. Optional Fully Offline AI

With Ollama integration, you can run the AI model itself on your machine. In this configuration, not even the AI inference touches the cloud. Your prompts and responses stay entirely on your hardware. The only external connection is the messaging platform itself (WhatsApp, Telegram, etc.).

With OpenClaw Easy + Ollama, your data path is: messaging platform → your computer → messaging platform. No AI provider is involved at any point.
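
To make the fully local path concrete, here is a minimal sketch of sending a prompt to a model served by Ollama on the same machine. It assumes Ollama is running on its default port (11434) and that a model tagged `llama3.2` has already been pulled. The function names are illustrative and are not part of OpenClaw Easy.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address and port.
OLLAMA_URL = "http://localhost:11434/api/chat"


def build_chat_request(prompt: str, model: str = "llama3.2") -> urllib.request.Request:
    """Build a non-streaming chat request for a local Ollama server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one complete JSON reply instead of chunks
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3.2") -> str:
    """Send the prompt to the local model and return the reply text."""
    request = build_chat_request(prompt, model)
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    return body["message"]["content"]
```

With Ollama running, `ask_local_model("Summarize this note in one sentence.")` returns the reply as a string. Because the request targets `localhost`, the prompt is not sent to any AI provider.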

How OpenClaw Easy Protects Your Privacy

Let us get specific about what OpenClaw Easy does (and does not do) with your data:

What Stays on Your Machine

- API keys -- your Claude, OpenAI, Gemini, or other API keys are stored locally in the app's config directory. They are never sent to OpenClaw servers.

- Conversation history -- all messages, both incoming and AI-generated responses, are stored locally on your machine.

- Configuration -- system prompts, agent settings, cron jobs, channel connections -- everything lives on your disk.

- Bot tokens -- your Telegram bot token, Discord bot token, and other channel credentials remain local.

What Leaves Your Machine

- Messages to/from the messaging platform -- when someone sends a WhatsApp message, that message travels through WhatsApp's infrastructure to reach your computer. This is inherent to how WhatsApp (and Telegram, Discord, etc.) work. OpenClaw Easy does not add any additional intermediary.

- Prompts to the AI provider -- if you use a cloud-based AI provider (Claude, ChatGPT, Gemini), your prompts are sent to that provider's API. This is the same data path as using those providers directly through their own apps.

- Nothing else -- OpenClaw Easy does not phone home, does not send telemetry, and does not upload your data to any server.

Key point: If you use Ollama (local model), even the AI prompts stay on your machine. The only external communication is the messaging platform connection itself.
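
One way to sanity-check this for any setup is to look at where the AI endpoint actually points. The sketch below is a hypothetical helper, not an OpenClaw Easy feature: it treats an endpoint as local only if its host is `localhost` or a loopback IP address, and treats everything else as remote.

```python
import ipaddress
from urllib.parse import urlparse


def stays_on_machine(endpoint_url: str) -> bool:
    """Return True if the endpoint's host is localhost or a loopback address."""
    host = urlparse(endpoint_url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other DNS name is treated as remote, erring on the side of caution.
        return False
```

For example, `stays_on_machine("http://localhost:11434/api/chat")` is `True`, while `stays_on_machine("https://api.openai.com/v1/chat/completions")` is `False`.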

OpenClaw Easy vs. Cloud Chatbot Platforms

How does a local-first approach compare to cloud-based chatbot platforms? Here is a side-by-side look:

Cloud Chatbot Platforms

(BotPress, Landbot, ManyChat, Chatfuel)

- Your data is processed on their servers

- Conversations are stored in their cloud database

- API keys may be stored on their infrastructure

- Monthly subscription fees ($50-$500+/month)

- Limited control over data retention

- Vendor lock-in -- migrating is difficult

- Subject to the vendor's privacy policy

- Internet connection always required

OpenClaw Easy (Local-First)

(Desktop app, runs on your machine)

- Data processed on your own computer

- Conversations stored on your hard drive

- API keys never leave your machine

- Free app -- you pay only for your own API usage, or nothing with a local model

- Full control over data -- delete anytime

- No vendor lock-in -- your data, your machine

- You are the privacy policy

- Offline AI possible with Ollama

Use Cases Where Privacy Matters Most

For some use cases, local-first AI is not just a nice-to-have -- it is essential:

Healthcare

Medical professionals who want to use AI to help with patient questions, symptom analysis, or treatment research need to ensure patient data stays confidential. HIPAA in the United States, and similar regulations in other countries, impose strict requirements on how health data is handled. With a local model, a local AI chatbot keeps patient-related prompts off AI providers' cloud servers entirely.

Legal

Lawyers and legal professionals deal with privileged communications. Using a cloud-based AI to draft legal documents, analyze contracts, or research case law creates a risk of confidential data being stored or accessed by third parties. Running the model locally removes the AI provider as one of those third parties.

Finance

Financial advisors, accountants, and investment professionals handle sensitive financial data. Client portfolio details, tax information, and financial strategies should not be processed by third-party cloud services. A local AI chatbot keeps financial conversations private.

Journalism and Activism

Journalists protecting sources and activists communicating in sensitive political environments need strong privacy guarantees. Cloud-based AI services can be subpoenaed, and data stored on third-party servers is always a potential liability. Local-first AI reduces the attack surface dramatically.

Business Strategy

Companies discussing competitive strategy, product roadmaps, M&A plans, or internal reorganizations should think twice about feeding that information into cloud AI services. A local AI chatbot means your strategic conversations stay within your organization.

The Trade-Offs of Local AI

Local-first AI is not without trade-offs. It is important to understand what you gain and what you give up:

What You Gain

- Data sovereignty -- with local models, your prompts and responses are never sent to an AI provider.

- No per-message fees -- local models cost nothing to query after the initial download, beyond your own hardware and electricity.

- No rate limits -- run as many queries as your hardware allows.

- Offline capability -- AI works without internet (though messaging platforms still need connectivity).

- Regulatory compliance -- easier to meet data residency and handling requirements.

What You Give Up

- Model quality -- the best cloud models (ChatGPT, Claude Opus) still outperform local models for complex reasoning tasks. The gap is closing rapidly, but it exists today.

- Speed -- cloud APIs often begin responding in under a second. Local models on consumer hardware typically take 2-10 seconds, depending on model size and hardware.

- Convenience -- cloud AI requires no setup beyond an API key. Local models require Ollama installation and model downloads.

- Hardware requirements -- running a 7B model smoothly requires at least 8 GB of RAM. Larger models need 16-48+ GB.
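
These RAM figures follow from simple arithmetic: the memory needed for a model's weights is roughly the parameter count times the bits stored per weight, divided by eight. The sketch below is a rule of thumb only. It assumes roughly 4-bit quantization, which is common for local models, and it ignores the context cache and runtime overhead, which add to the total.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    # billions of parameters * bits per weight / 8 bits per byte = billions of bytes
    return params_billions * bits_per_weight / 8


print(weight_memory_gb(7, 4))    # 3.5  -> leaves headroom on an 8 GB machine
print(weight_memory_gb(7, 16))   # 14.0 -> the same model without quantization
print(weight_memory_gb(70, 4))   # 35.0 -> why larger models need far more RAM
```

The operating system, the app itself, and the model's working memory all share the same RAM, which is why the practical minimum sits well above the raw weight size.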

The Best of Both Worlds

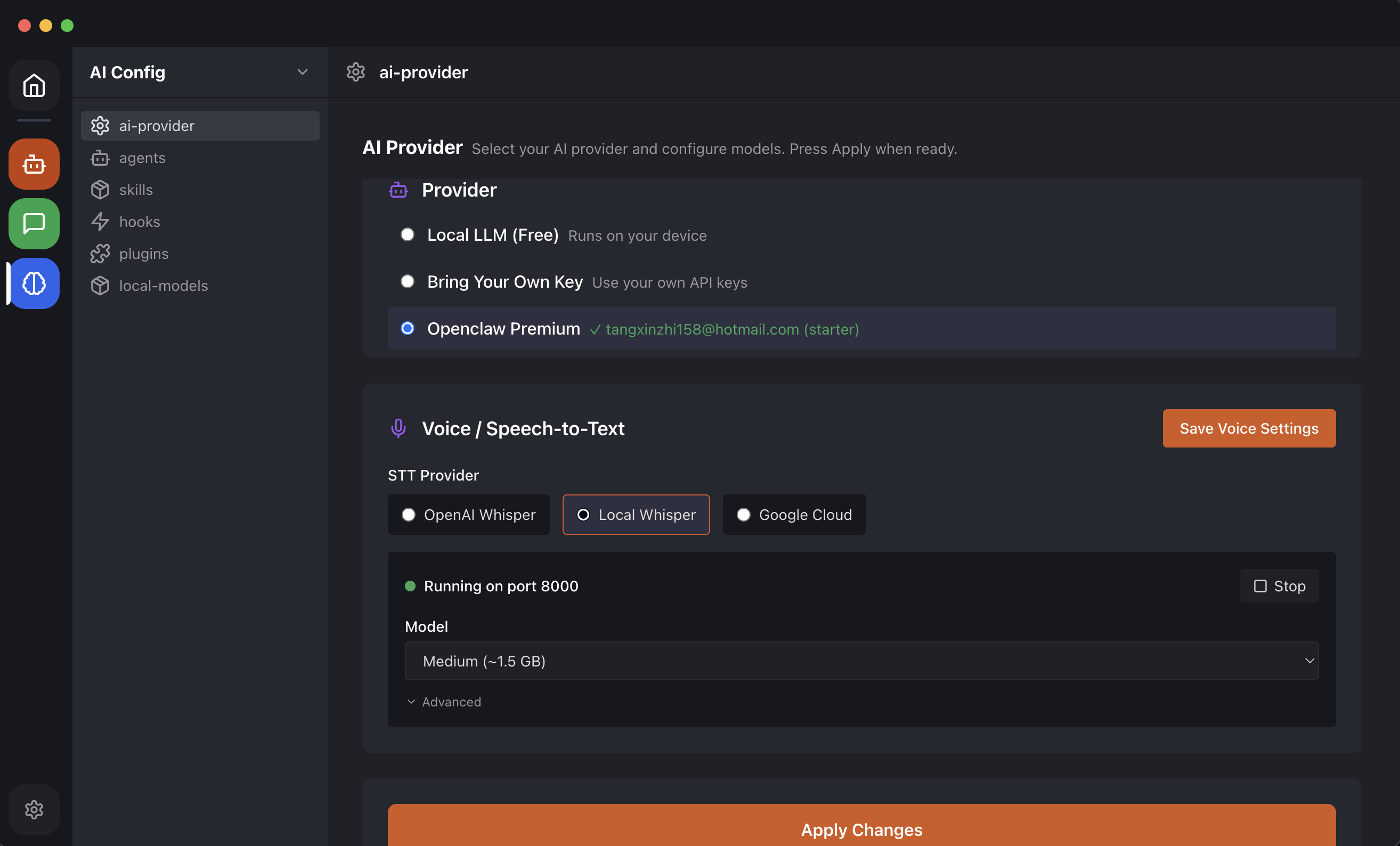

Here is the good news: OpenClaw Easy does not force you to choose. You can use both cloud and local AI, switching between them as needed:

- Use Claude or ChatGPT for high-quality responses when privacy is not a concern.

- Switch to a local Ollama model when handling sensitive conversations.

- Change models at any time without reconnecting your messaging channels.

This hybrid approach gives you the best response quality when you need it and full privacy when it matters. And because OpenClaw Easy itself runs locally regardless of which AI provider you use, your configuration, conversation history, and channel credentials always stay on your machine.
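
As a rough illustration of the hybrid idea, the sketch below picks a backend per message. Everything in it is hypothetical: the `Backend` type, the placeholder cloud URL, and the keyword list are assumptions made for the example, and OpenClaw Easy's own model switching may work quite differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    base_url: str
    local: bool


# Hypothetical backends: a local Ollama model and a placeholder cloud provider.
LOCAL = Backend("ollama/llama3.2", "http://localhost:11434", local=True)
CLOUD = Backend("cloud-provider", "https://api.example.com", local=False)

# Illustrative terms only; a real list would reflect your own sensitive topics.
SENSITIVE_TERMS = ("patient", "diagnosis", "contract", "portfolio", "merger")


def pick_backend(message: str, force_local: bool = False) -> Backend:
    """Route to the local model when forced or when a sensitive term appears."""
    text = message.lower()
    if force_local or any(term in text for term in SENSITIVE_TERMS):
        return LOCAL
    return CLOUD
```

Keyword matching is crude and will miss sensitive messages that use none of the listed terms, so a cautious setup would default to the local model and opt in to the cloud explicitly (here, the `force_local` flag covers the manual case).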

How to Get Started with a Private AI Chatbot

Setting up a privacy-first AI chatbot with OpenClaw Easy takes just a few minutes:

- Download OpenClaw Easy from openclaw-easy.com -- free for macOS and Windows.

- For maximum privacy, install Ollama and pull a model like Llama 3.2 or Qwen 2.5. See our step-by-step Ollama tutorial.

- Connect your messaging channel -- WhatsApp, Telegram, Discord, or Slack.

- Start chatting -- your AI is live, and your data stays on your machine.

The Future of Local-First AI

The trend is clear: local AI is getting better, faster, and more accessible every month. Open-source models are rapidly closing the gap with proprietary cloud models. Hardware is getting more powerful -- Apple Silicon Macs can now run 7B models with impressive speed, and NVIDIA consumer GPUs make local inference practical on Windows.

We believe the future of AI chatbots is local-first. Not because cloud AI will disappear, but because users deserve the choice. You should be able to decide where your data goes, which model processes it, and who has access to your conversations.

OpenClaw Easy is built around that principle. Your computer. Your data. Your choice.

Download OpenClaw Easy for free and experience a privacy-first AI chatbot today.

What's Next?

Explore more ways to use your privacy-first AI setup:

- Try the desktop AI app -- compare the best free AI desktop apps in 2026.

- Add AI to Slack -- find the best AI chatbot for Slack for your team.

- Schedule AI tasks -- set up cron jobs for automated AI messages.

- Connect WhatsApp -- add AI to WhatsApp in under 5 minutes.