What's new in May 2026

OpenClaw Easy 2026.5 ships with native Ollama auto-discovery — once Ollama is running, the AI Provider picker lists every installed model (Llama 3.2, Qwen 2.5, DeepSeek R1, Mistral) without any config. The screenshots below now reflect the new picker UI. Also added: a section on running DeepSeek R1 distilled 7B, which became the new sweet spot on Apple Silicon Macs after the January release.

Cloud-based AI models like ChatGPT and Claude are powerful, but they come with a trade-off: your messages leave your machine and travel to a third-party server. For many users, that is a deal-breaker. What if you could run a local LLM on WhatsApp where nothing ever leaves your computer?

With Ollama and OpenClaw Easy, you can. In this tutorial, you will install a local AI model on your machine, connect it to WhatsApp, and have a fully private AI chatbot running in about 15 minutes. No API keys. No cloud dependency. No data leaks.

What You Will Need

- A computer running macOS or Windows with at least 8 GB of RAM (16 GB or more recommended for larger models).

- Ollama -- a free, open-source tool for running local LLMs. Available at ollama.com.

- OpenClaw Easy -- the free desktop app that connects your local model to WhatsApp.

- WhatsApp on your phone.

- 15 minutes -- most of that is downloading the model.

Why Run a Local LLM on WhatsApp?

There are several compelling reasons to run a local model instead of a cloud API:

- Complete privacy -- your prompts and AI responses are generated on your machine and never sent to an AI API provider. (Messages still pass through WhatsApp itself, as with any WhatsApp chat.)

- Zero cost -- no API fees, no token charges, no subscription. Once the model is downloaded, it runs for free.

- Offline capable -- your AI works even without an internet connection (WhatsApp still needs internet, but the AI inference is local).

- Data sovereignty -- for businesses, healthcare, legal, and other sensitive domains, keeping data local is not optional -- it is a requirement.

- No rate limits -- cloud APIs have rate limits and usage caps. A local model has no such restrictions.

Step-by-Step: Connect a Local LLM to WhatsApp

1 Install Ollama

Ollama is the easiest way to run open-source LLMs locally. Download it from ollama.com.

- macOS -- download the .dmg and drag to Applications.

- Windows -- download the .exe installer and run it.

After installation, Ollama runs as a background service on your machine. It serves models through a local API at http://localhost:11434.
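If you would like to confirm the background service is up before going further, here is a minimal sketch in Python. It assumes the default address above and relies on Ollama's root page answering with the text "Ollama is running"; the function names are made up for this example.

```python
"""Check whether a local Ollama server answers on its default address."""
from urllib.request import urlopen

OLLAMA_URL = "http://localhost:11434"  # default address mentioned in the tutorial


def looks_like_ollama(body: str) -> bool:
    """Return True if the root-page text looks like Ollama's greeting."""
    return "ollama is running" in body.lower()


def ollama_is_up(base_url: str = OLLAMA_URL, timeout: float = 2.0) -> bool:
    """Return True if the server at base_url responds like Ollama."""
    try:
        with urlopen(base_url, timeout=timeout) as resp:
            return looks_like_ollama(resp.read().decode("utf-8", "replace"))
    except OSError:  # connection refused, timeout, or an HTTP error status
        return False


if __name__ == "__main__":
    print("Ollama reachable:", ollama_is_up())
```

If this prints False, check that the Ollama app is actually running and that nothing else is using port 11434.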

2 Pull a Model

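Open a terminal (Terminal on macOS, Command Prompt or PowerShell on Windows) and download a model with the `ollama pull` command. A popular first choice is Llama 3.2: run `ollama pull llama3.2`.

Other models are pulled the same way, for example `ollama pull qwen2.5:7b`, `ollama pull deepseek-r1:7b`, or `ollama pull mistral`.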

The download size varies by model. Llama 3.2 (3B, the default llama3.2 tag) is about 2.0 GB, Phi-3 (3.8B) is around 2.3 GB, and 7B models such as Qwen 2.5 are 4-5 GB. Larger models like Qwen 2.5 (72B) are 40+ GB and require significant hardware.

Tip: Start with llama3.2 or qwen2.5:7b for a good balance of quality and speed. You can always try larger models later.

3 Verify Ollama Is Running

Once the model is downloaded, verify that Ollama is serving it correctly by running `ollama list`.

You should see your downloaded model in the list. You can also test it directly, for example with `ollama run llama3.2 "Say hello in one sentence."`.

If you get an AI response, Ollama is working and ready to connect to OpenClaw Easy.
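The same check can be done over Ollama's local HTTP API, which is what a desktop app would talk to. The sketch below lists installed models via GET /api/tags and sends one non-streaming prompt to POST /api/generate. The helper names are illustrative, and how OpenClaw Easy itself calls Ollama is not covered here.

```python
"""List installed Ollama models and send one test prompt over the local API."""
import json
from urllib.request import Request, urlopen

OLLAMA_URL = "http://localhost:11434"  # default Ollama address


def model_names(tags_payload: dict) -> list[str]:
    """Extract model names from a GET /api/tags response body."""
    return [m["name"] for m in tags_payload.get("models", [])]


def list_models(base_url: str = OLLAMA_URL) -> list[str]:
    """Ask the local server which models are installed."""
    with urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        return model_names(json.load(resp))


def ask(model: str, prompt: str, base_url: str = OLLAMA_URL) -> str:
    """Send a single non-streaming prompt and return the reply text."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    req = Request(f"{base_url}/api/generate", data=body,
                  headers={"Content-Type": "application/json"})
    with urlopen(req, timeout=120) as resp:
        return json.load(resp)["response"]


if __name__ == "__main__":
    try:
        names = list_models()
        print("Installed models:", names)
        if names:
            print(ask(names[0], "Reply with one short greeting."))
    except OSError as err:
        print("Could not reach Ollama:", err)
```

The first request to a model can take a while because Ollama has to load it into memory, which is why the timeout on `ask` is generous.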

4 Download and Open OpenClaw Easy

Head to the OpenClaw Easy download page and install the app for macOS or Windows. Open it once installed.

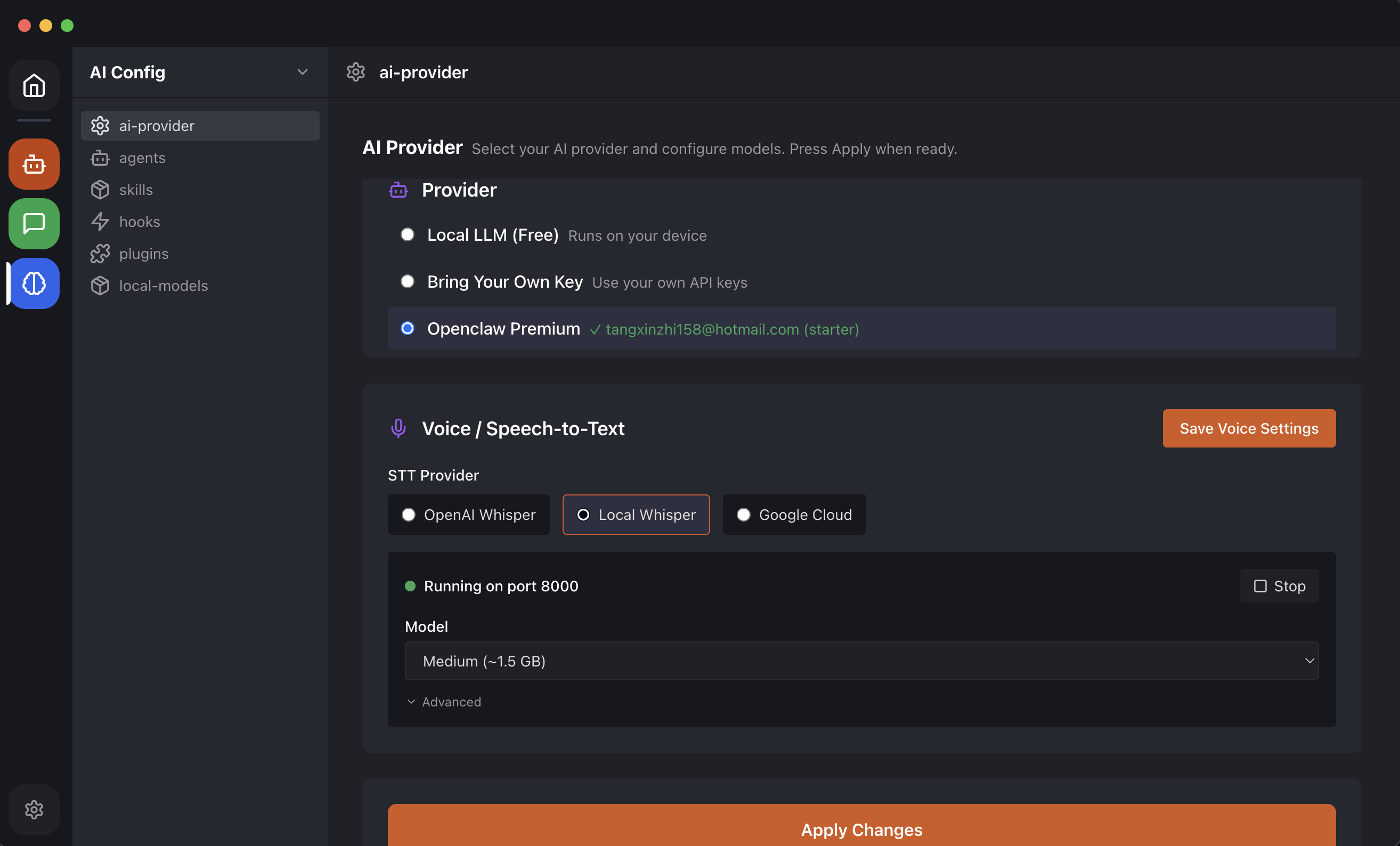

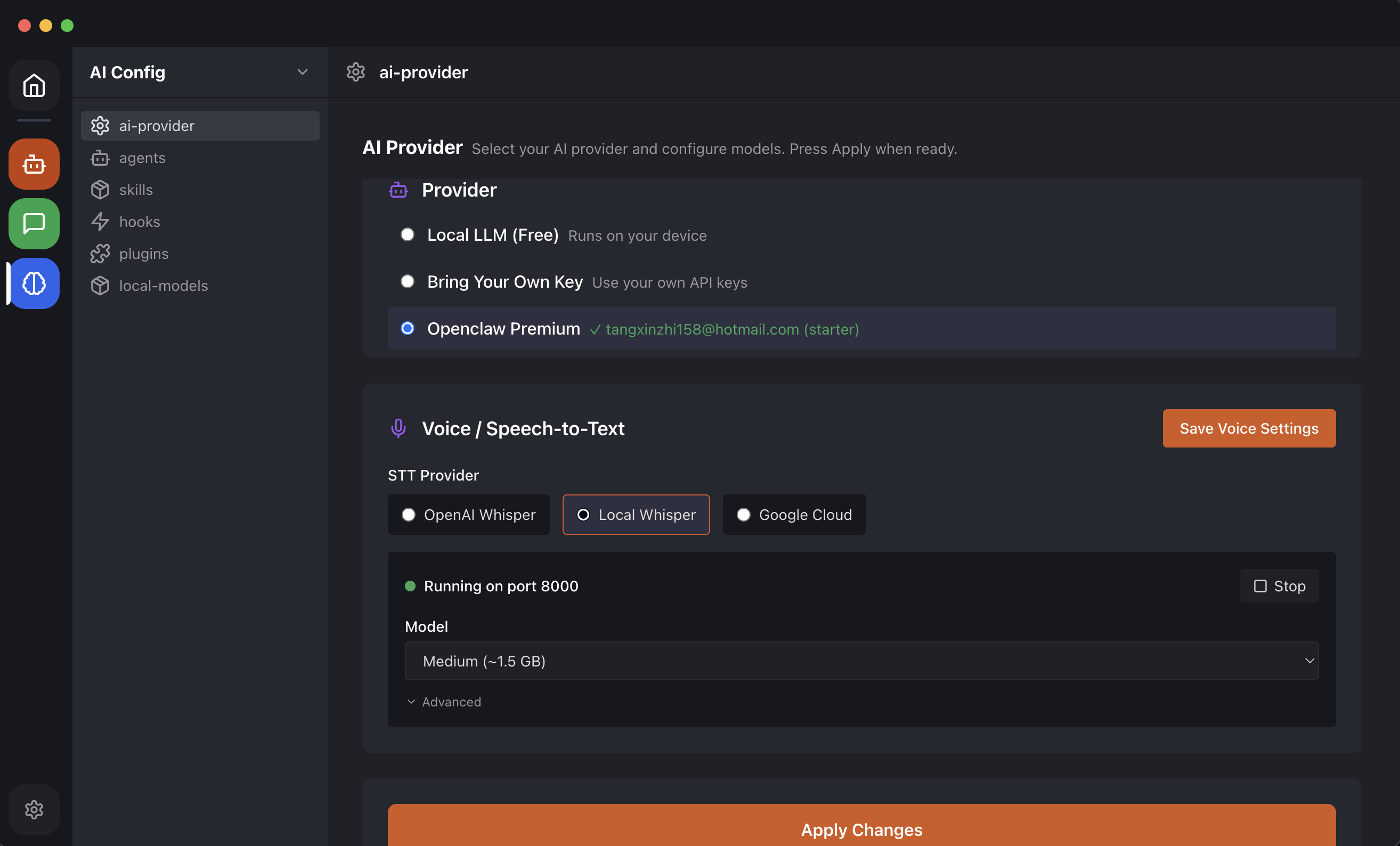

5 Configure Ollama as Your AI Provider

In OpenClaw Easy:

- Go to AI Provider in the sidebar.

- Select Local LLM (or Ollama, depending on your app version).

- Confirm the endpoint is http://localhost:11434 (the default Ollama address). Change it only if your Ollama instance listens somewhere else.

OpenClaw Easy will automatically detect which models you have available through Ollama.

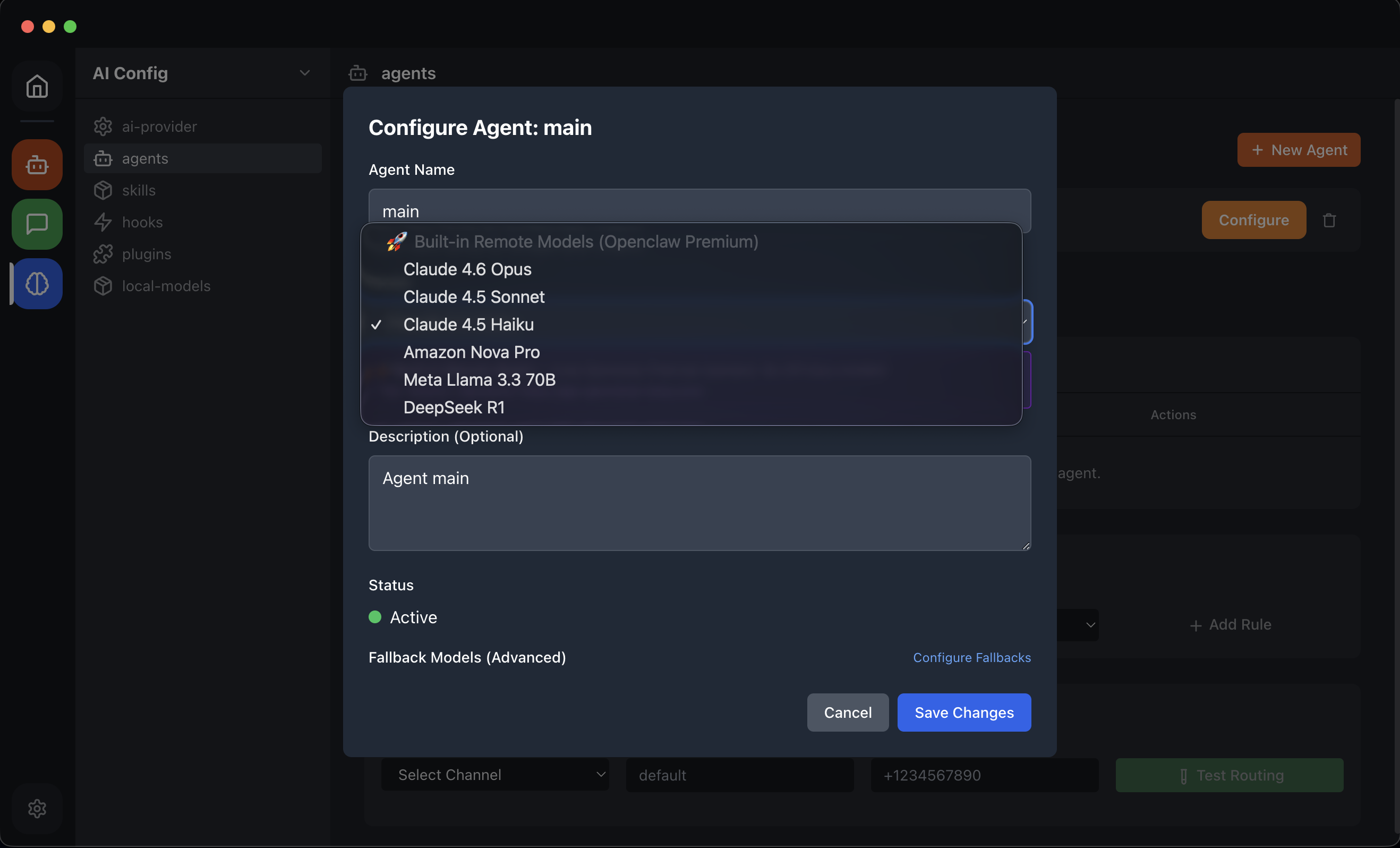

6 Select Your Model

Go to Agent Config in the sidebar. In the model dropdown, you will see all the Ollama models you have pulled. Select the one you want to use -- for example, llama3.2.

7 Connect WhatsApp

Now connect WhatsApp:

- Go to Channels in the sidebar.

- Click WhatsApp.

- Scan the QR code with your phone (WhatsApp > Settings > Linked Devices > Link a Device).

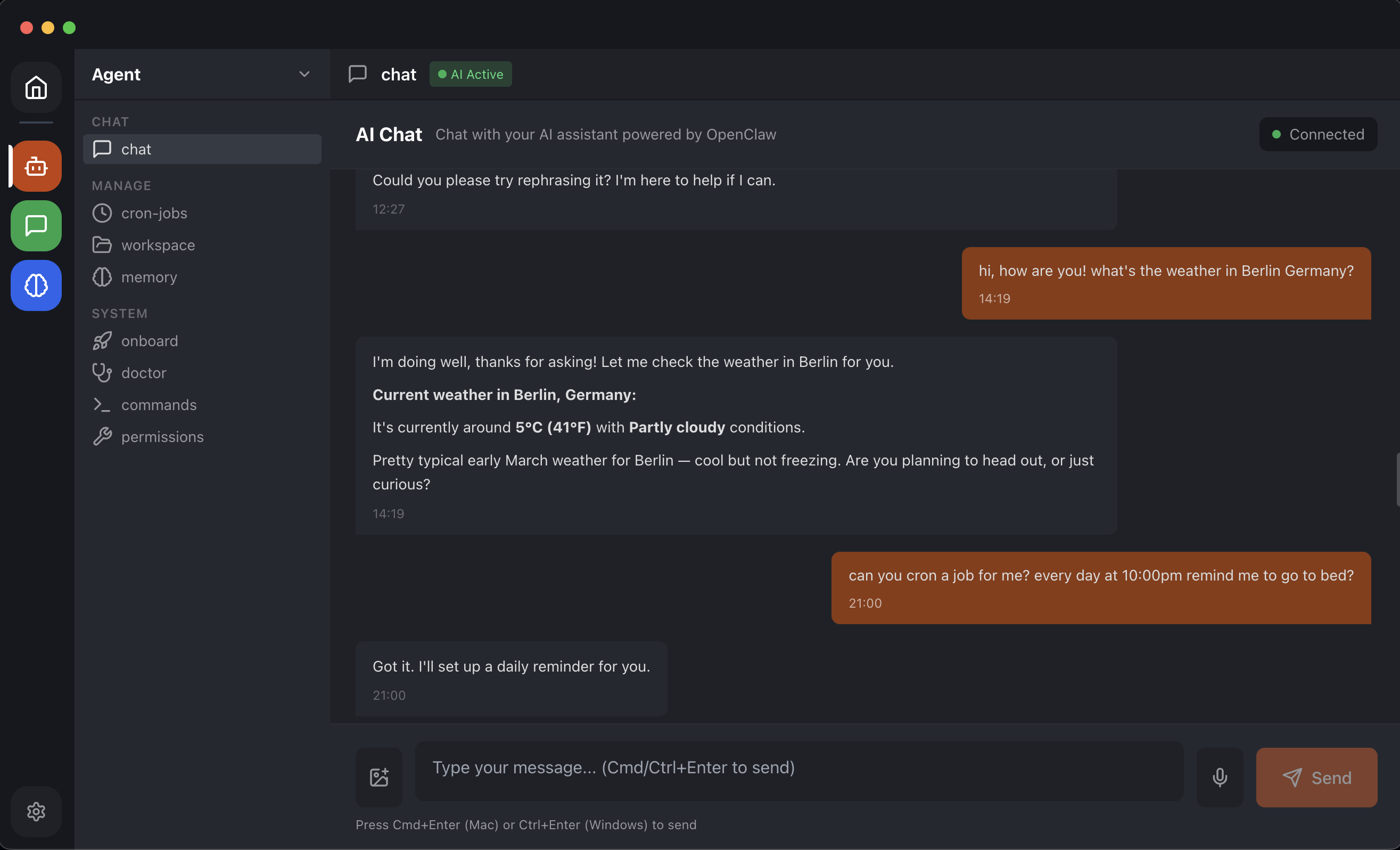

Your local LLM is now live on WhatsApp. Send a message and the AI will respond -- all processed entirely on your machine.

Choosing the Right Local Model

Not all models are equal. Here is a comparison of popular models you can run with Ollama, along with their strengths and hardware requirements:

| Model | Size | Min RAM | Best For |

|---|---|---|---|

| Llama 3.2 (3B) | 2.0 GB | 8 GB | General chat, Q&A, balanced speed/quality |

| Qwen 2.5 (7B) | 4.4 GB | 8 GB | Multilingual, coding, reasoning |

| DeepSeek-R1 (7B) | 4.7 GB | 8 GB | Reasoning, math, logic puzzles |

| Mistral (7B) | 4.1 GB | 8 GB | Fast responses, general knowledge |

| Phi-3 (3.8B) | 2.3 GB | 4 GB | Lightweight, resource-constrained machines |

| Llama 3.1 (70B) | 40 GB | 48 GB | Near-cloud quality, powerful hardware required |

Tip: If you have a Mac with Apple Silicon (M1/M2/M3/M4), you get excellent performance for local inference. The unified memory architecture means even 8 GB machines can run 7B models smoothly. On Windows, having a dedicated GPU (NVIDIA with CUDA) significantly improves speed.
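To shortlist candidates quickly, the Min RAM column can be turned into a small lookup. This is only a sketch: the figures are copied from the table above, and the function name is made up for this example.

```python
"""Filter the comparison table by the RAM available on your machine."""

# Minimum RAM in GB per model, copied from the "Min RAM" column of the table
MIN_RAM_GB = {
    "Llama 3.2": 8,
    "Qwen 2.5 (7B)": 8,
    "DeepSeek-R1 (7B)": 8,
    "Mistral (7B)": 8,
    "Phi-3 (3.8B)": 4,
    "Llama 3.1 (70B)": 48,
}


def models_that_fit(ram_gb: float) -> list[str]:
    """Return the models whose minimum RAM is within ram_gb."""
    return [name for name, need in MIN_RAM_GB.items() if need <= ram_gb]


if __name__ == "__main__":
    for ram in (4, 8, 64):
        print(f"{ram} GB RAM:", ", ".join(models_that_fit(ram)) or "none")
```

Treat the result as a starting point only, since the operating system and your other apps also need memory.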

Performance Tips for Local LLMs on WhatsApp

Running a local model on WhatsApp is different from using a cloud API. Here are some tips to get the best experience:

Keep Responses Short

Local models generate text more slowly than cloud APIs. Set a reasonable maximum response length in OpenClaw Easy's Agent Config. For WhatsApp conversations, 150-300 tokens is usually ideal -- enough for a helpful answer, fast enough to feel responsive.
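For reference, this is roughly what a length cap looks like at the Ollama API level, where the `num_predict` option limits how many tokens are generated. How OpenClaw Easy passes its own setting to Ollama is not documented here, so read this as an illustration of the idea. The function name is hypothetical.

```python
"""Build an Ollama /api/generate request body that caps the reply length."""
import json


def capped_request(model: str, prompt: str, max_tokens: int = 200) -> bytes:
    """Return a JSON body whose num_predict option limits generated tokens."""
    payload = {
        "model": model,
        "prompt": prompt,
        "stream": False,
        "options": {"num_predict": max_tokens},  # Ollama's cap on output tokens
    }
    return json.dumps(payload).encode("utf-8")


if __name__ == "__main__":
    body = capped_request("llama3.2", "Summarize WhatsApp in one sentence.")
    print(body.decode())
```

A hard cap can cut a reply off mid-sentence, so it often helps to also ask for brief answers in the prompt.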

Use a Smaller Model If Speed Matters

A 7B model on an M2 MacBook Air can generate about 20-30 tokens per second. A 100-word reply is roughly 130 tokens, so it takes about 4-7 seconds. If that feels too slow, try a smaller model like Phi-3 (3.8B), which runs nearly twice as fast on the same hardware.
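You can redo this arithmetic for your own machine. The sketch below assumes about 1.3 tokens per English word, which is a rough rule of thumb and not an exact figure. It also shows how to derive tokens per second from the `eval_count` and `eval_duration` (nanoseconds) fields that Ollama includes in a non-streaming /api/generate response. The sample numbers are invented.

```python
"""Estimate reply latency, and measure generation speed from Ollama stats."""

TOKENS_PER_WORD = 1.3  # assumption: rough average for English text


def reply_seconds(words: int, tokens_per_second: float) -> float:
    """Estimated time to generate a reply of the given word count."""
    return words * TOKENS_PER_WORD / tokens_per_second


def tokens_per_second(stats: dict) -> float:
    """Speed from eval_count (tokens) and eval_duration (nanoseconds)."""
    return stats["eval_count"] / (stats["eval_duration"] / 1e9)


if __name__ == "__main__":
    for speed in (20, 30):
        print(f"{speed} tok/s: {reply_seconds(100, speed):.1f} s for 100 words")
    sample = {"eval_count": 130, "eval_duration": 5_200_000_000}  # invented stats
    print(f"measured speed: {tokens_per_second(sample):.1f} tok/s")
```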

Close Heavy Applications

Local inference uses your CPU and RAM heavily. If you are running heavy applications alongside Ollama, you may notice slower responses. For best performance, keep background applications minimal while the AI bot is active.

Use Quantized Models

Ollama models are already quantized (compressed to fewer bits per weight) by default, but you can choose different quantization levels. The default Q4 quantization offers a good balance of size and quality. If you want higher quality and have the RAM, try Q8 variants, which are roughly twice as large.
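A back-of-the-envelope way to see what a quantization level costs is parameters times bits per weight. The sketch below uses 4.5 bits for Q4_0 and 8.5 bits for Q8_0, which is how those formats store weights once per-block scale factors are counted. Real downloads run somewhat larger because of mixed-precision layers and metadata, so treat the output as a lower bound.

```python
"""Rough model-size estimate from parameter count and quantization level."""

# Effective bits per weight, including per-block scale factors
BITS_PER_WEIGHT = {"Q4_0": 4.5, "Q8_0": 8.5, "F16": 16.0}


def approx_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        print(f"7B at {name}: about {approx_size_gb(7, bits):.1f} GB")
```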

Local LLM vs. Cloud API: When to Use Each

Running a local model is not always the best choice. Here is when to pick local vs. cloud:

Use a Local LLM When:

- Privacy is a hard requirement (medical, legal, financial conversations).

- You want zero ongoing costs after setup.

- You need offline capability.

- You are experimenting with open-source models.

- You have decent hardware (8 GB+ RAM, ideally Apple Silicon or NVIDIA GPU).

Use a Cloud API When:

- Response quality is paramount (ChatGPT and Claude still outperform most local models).

- Speed matters -- cloud APIs typically start responding within a second or two, even for long answers.

- Your hardware is limited (older laptop, low RAM).

- You need the latest, largest models (100B+ parameters).

The good news is that OpenClaw Easy supports both. You can start with a local model and switch to a cloud API (or vice versa) at any time, without reconnecting WhatsApp.

Frequently Asked Questions

Can I use multiple models at the same time?

Ollama can serve multiple models, but OpenClaw Easy uses one model per agent configuration. You can switch models in the Agent Config whenever you want -- the change takes effect immediately.

Does Ollama use my GPU?

Yes, when available. On macOS with Apple Silicon, Ollama uses the integrated GPU automatically. On Windows and Linux, it uses NVIDIA GPUs via CUDA and supported AMD GPUs via ROCm. CPU-only inference works but is significantly slower for larger models.
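To see whether a loaded model actually landed on the GPU, you can run `ollama ps` in a terminal or query GET /api/ps. The sketch below assumes the response lists each loaded model with `size` and `size_vram` byte counts, and reports the share held in GPU memory. The helper names are made up for this example.

```python
"""Report how much of each loaded Ollama model sits in GPU memory."""
import json
from urllib.request import urlopen

OLLAMA_URL = "http://localhost:11434"  # default Ollama address


def gpu_share(entry: dict) -> float:
    """Fraction (0.0 to 1.0) of a loaded model held in GPU memory."""
    size = entry.get("size", 0)
    return entry.get("size_vram", 0) / size if size else 0.0


def loaded_models(base_url: str = OLLAMA_URL) -> list[dict]:
    """Fetch the currently loaded models from GET /api/ps."""
    with urlopen(f"{base_url}/api/ps", timeout=5) as resp:
        return json.load(resp).get("models", [])


if __name__ == "__main__":
    try:
        for entry in loaded_models():
            print(f"{entry['name']}: {gpu_share(entry):.0%} on GPU")
    except OSError as err:
        print("Could not reach Ollama:", err)
```

Only models that are currently loaded show up, so send a message to the bot first if the list comes back empty.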

How much disk space do I need?

It depends on the model. A single 7B model takes about 4-5 GB of disk space. You can download multiple models and switch between them. If disk space is tight, stick to one model at a time.
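If you have pulled several models and want a running total, each entry in the GET /api/tags response carries a `size` in bytes. A minimal sketch, with an invented function name and the default Ollama address assumed:

```python
"""Add up the disk space used by all installed Ollama models."""
import json
from urllib.request import urlopen

OLLAMA_URL = "http://localhost:11434"  # default Ollama address


def total_disk_gb(tags_payload: dict) -> float:
    """Sum the per-model size fields (bytes) and convert to GB."""
    total_bytes = sum(m.get("size", 0) for m in tags_payload.get("models", []))
    return total_bytes / 1e9


if __name__ == "__main__":
    try:
        with urlopen(f"{OLLAMA_URL}/api/tags", timeout=5) as resp:
            print(f"Models on disk: {total_disk_gb(json.load(resp)):.1f} GB")
    except OSError as err:
        print("Could not reach Ollama:", err)
```

Models you no longer need can be deleted with `ollama rm` followed by the model name.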

Can I use this with Telegram or Discord too?

Absolutely. Once Ollama is configured as your AI provider in OpenClaw Easy, it works with every channel -- WhatsApp, Telegram, Discord, Slack, and more. The AI provider is independent of the messaging channel.

What happens if my computer goes to sleep?

Both Ollama and OpenClaw Easy will pause when your computer sleeps. Messages sent during sleep will be processed when the computer wakes up. To keep your bot running 24/7, prevent your machine from sleeping (adjust power settings).

What Is Next?

You now have a fully private, local AI chatbot running on WhatsApp. Here is what to explore next:

- Try different models -- pull Qwen, DeepSeek, or Mistral and compare response quality.

- Connect more channels -- add your local AI to Telegram, Discord, or Slack.

- Schedule AI tasks -- set up cron jobs to send automated AI messages on a schedule.

- Read more about privacy -- learn about why local-first AI matters.

- Try the desktop AI app -- explore the best free AI desktop apps in 2026.

Running a local LLM on WhatsApp used to require custom code, servers, and deep technical knowledge. With Ollama and OpenClaw Easy, it takes 15 minutes. Download OpenClaw Easy for free and try it right now.