You do not need a browser tab to use AI. The best AI desktop apps let you chat with Claude, ChatGPT, Gemini, or open-source models right from your computer -- no web interface required. Some run models entirely on your hardware for full privacy. Others connect to cloud APIs for the most capable models available. And a few do something no web app can: they bring AI directly into your messaging apps like WhatsApp, Telegram, and Slack.

We compared the top 6 free AI desktop apps in 2026 to help you pick the right one. Whether you want a simple local chat, a powerful multi-model interface, or AI that works across all your messaging channels, this guide covers it.

What to Look For in an AI Desktop App

Before diving into the comparison, here are the key criteria we used to evaluate each app:

- AI model support -- does the app support cloud models (Claude, ChatGPT, Gemini), local models (via Ollama or GGUF), or both?

- Privacy -- is it local-first? Does your data stay on your machine, or does it pass through third-party servers?

- Messaging channel integration -- can you connect the AI to WhatsApp, Telegram, Slack, Discord, or other platforms?

- Price -- is the app free? Are there hidden costs or paid tiers?

- Platform support -- does it run on macOS, Windows, and Linux?

- Ease of use -- how quickly can you go from download to first conversation?

The 6 Best Free AI Desktop Apps in 2026

1. OpenClaw Easy

OpenClaw Easy is a free desktop app for macOS and Windows that combines multi-model AI chat with something no other app on this list offers: messaging channel integration. You can connect your AI to WhatsApp, Telegram, Slack, Discord, Feishu, and Line -- all from a single desktop application.

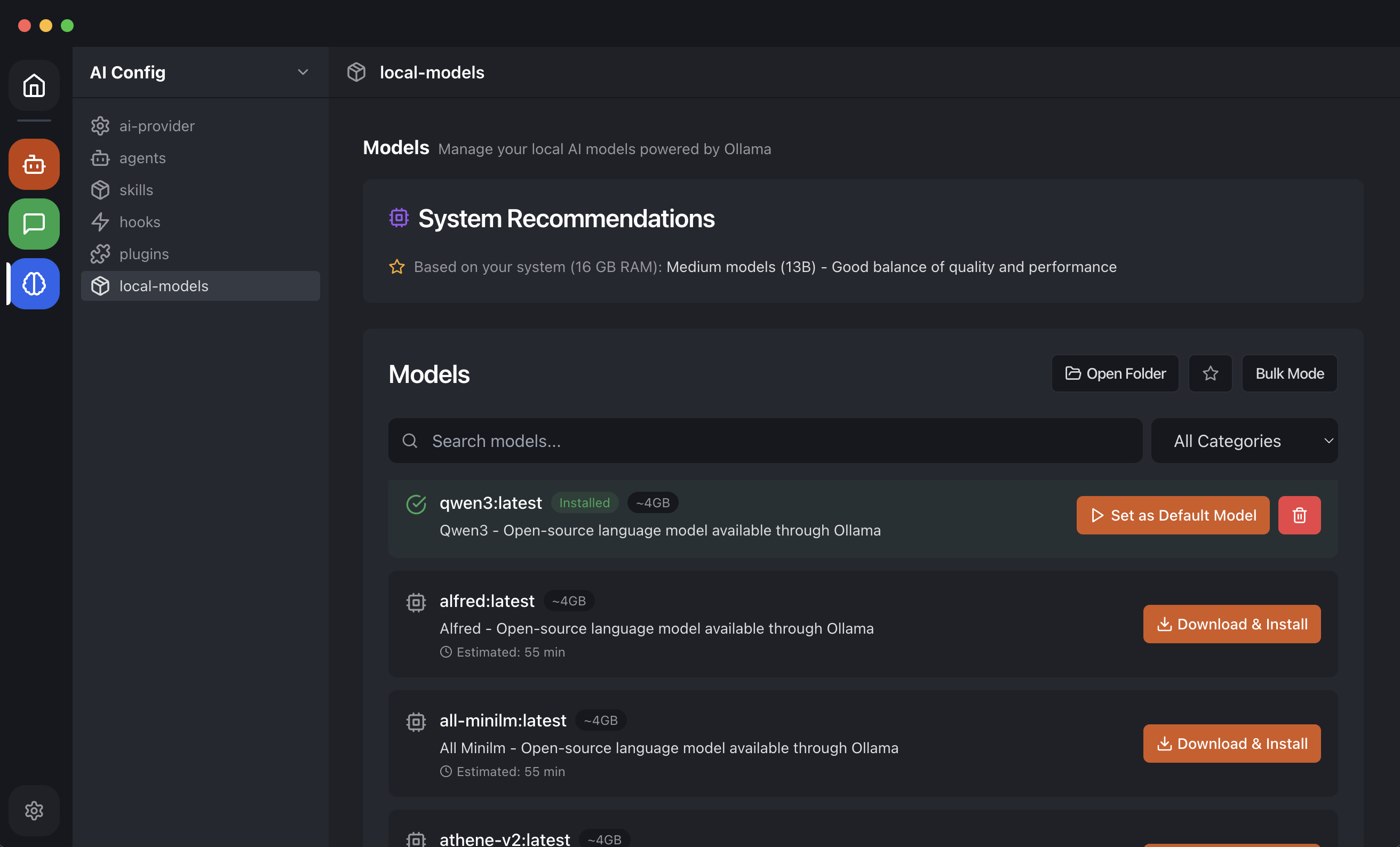

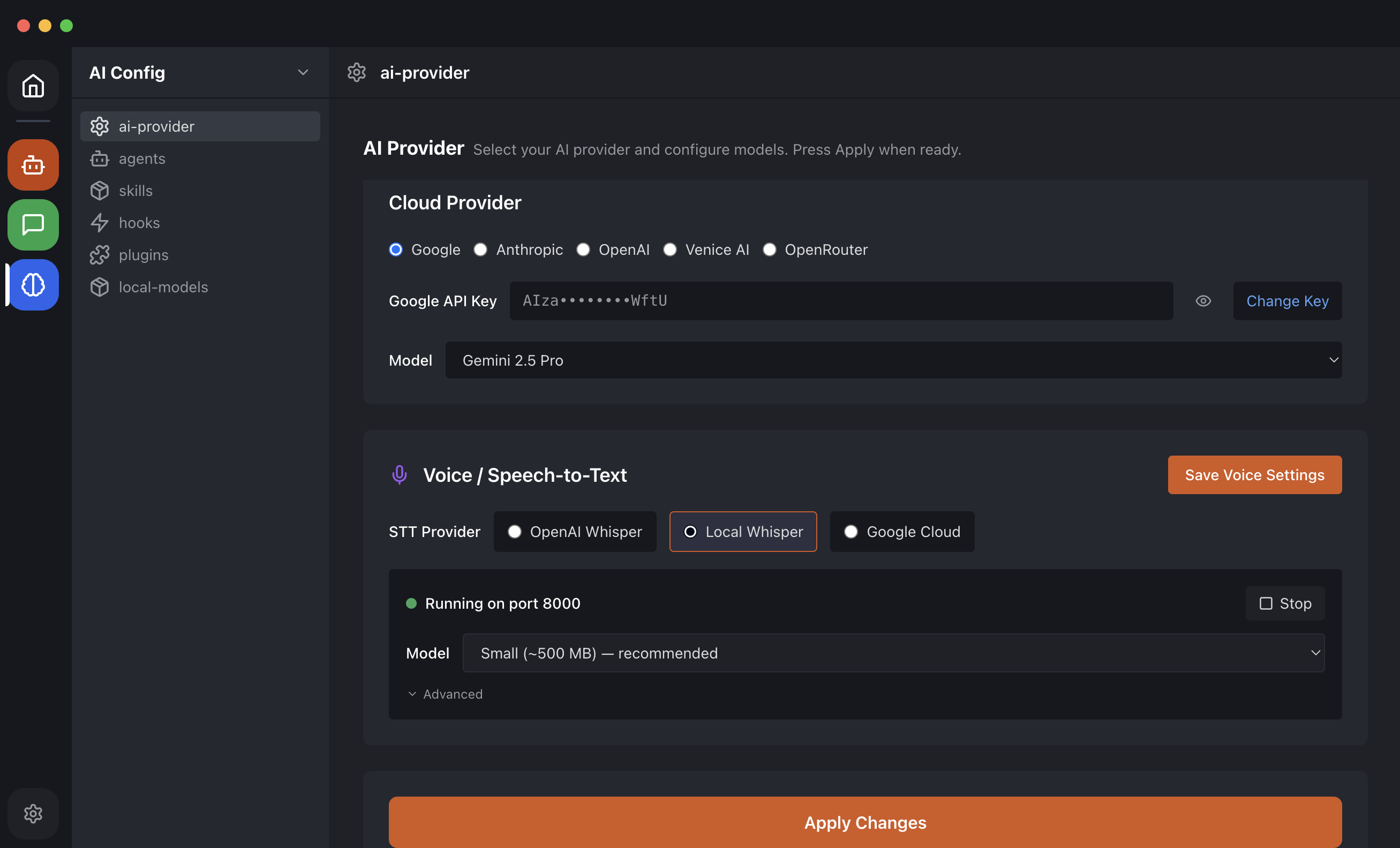

On the AI side, OpenClaw Easy supports Claude (Anthropic), ChatGPT (OpenAI), Gemini (Google), and local LLMs via Ollama. You bring your own API key for cloud models, or point the app at a local Ollama instance for fully private, offline AI. Switching between models takes one click -- no need to reconnect your channels.

The app is privacy-first by design. It runs entirely on your machine. Your API keys, conversation history, channel credentials, and configuration all stay on your hard drive. There is no intermediate cloud server. When you use Ollama, not even the AI inference leaves your computer.

Additional features include cron jobs for scheduled AI tasks (for example, sending a daily summary to a Telegram group every morning), customizable system prompts, and a built-in desktop chat for testing models before deploying them to messaging channels.
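To make the "daily summary every morning" idea concrete, here is a minimal sketch of how a once-a-day schedule can be computed. It is not OpenClaw Easy's scheduler; the function name `next_daily_run` and the 08:00 run time are assumptions made for illustration.

```python
from datetime import datetime, time, timedelta


def next_daily_run(now: datetime, run_at: time) -> datetime:
    """Return the next datetime at which a once-a-day job should fire."""
    candidate = datetime.combine(now.date(), run_at)
    # If today's slot has already passed (or is exactly now), use tomorrow's.
    if candidate <= now:
        candidate += timedelta(days=1)
    return candidate


# Hypothetical example: a summary job scheduled for 08:00 every morning.
now = datetime(2026, 1, 15, 9, 30)
print(next_daily_run(now, time(8, 0)))  # 2026-01-16 08:00:00
```

A real scheduler also has to handle time zones and missed runs, which this sketch ignores.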

Best for: anyone who wants AI both in their messaging apps and as a desktop chat. The multi-channel support sets OpenClaw Easy apart from every other app on this list. If you use WhatsApp, Telegram, or Slack daily and want AI integrated into those conversations, this is the clear choice.

2. Jan.ai

Jan.ai is an open-source, local-first AI desktop app. It runs Llama, Mistral, Phi, and other open-source models entirely on your hardware. The interface is clean and focused: download a model, start chatting. Jan also supports OpenAI-compatible APIs if you want to connect a cloud model.

Model management is a strength. Jan makes it easy to browse, download, and switch between models. It handles quantized GGUF files well and provides clear information about model sizes and hardware requirements.
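If you are wondering why quantization matters for hardware requirements, the arithmetic is simple: weight size is roughly parameters times bits per weight, divided by eight. The sketch below is a back-of-the-envelope estimate, not how Jan calculates its figures. It counts the weights only; real memory use is higher because of context cache and runtime overhead, and actual GGUF quantizations mix bit widths. The function names are made up for this example.

```python
def estimate_weight_bytes(num_params: float, bits_per_weight: float) -> float:
    """Rough size of the model weights alone, in bytes."""
    return num_params * bits_per_weight / 8


def to_gb(num_bytes: float) -> float:
    """Convert bytes to decimal gigabytes."""
    return num_bytes / 1e9


# A 7-billion-parameter model at three common precisions.
for bits in (16, 8, 4):
    size = to_gb(estimate_weight_bytes(7e9, bits))
    print(f"7B parameters at {bits}-bit: about {size:.1f} GB")
```

By this estimate, a 7B model drops from about 14 GB at 16-bit to about 3.5 GB at 4-bit, which is why quantized files are practical on laptops.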

Jan does not offer messaging channel integration. It is purely a desktop chat application. There is no way to connect it to WhatsApp, Telegram, or any other messaging platform.

Available on macOS, Windows, and Linux.

Best for: users who want a simple, well-designed local AI chat with no cloud dependency. If your primary goal is running open-source models on your own hardware with a nice UI, Jan is an excellent choice.

3. LM Studio

LM Studio is a local model inference tool with a polished, developer-friendly interface. It supports GGUF models and makes model discovery effortless -- you can search, preview, and download models directly from within the app.

LM Studio also runs a local API server, which means other applications can use it as a backend for inference. For developers building AI-powered tools, this is a major plus. The chat interface is clean, and the app provides detailed performance metrics (tokens per second, memory usage) during inference.
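As an illustration of what "using it as a backend" looks like, here is a minimal sketch that talks to a local server through an OpenAI-style chat completions endpoint using only the Python standard library. The address `http://localhost:1234/v1` and the model name you pass in are assumptions; check the app's server settings for the real values.

```python
import json
import urllib.request

# Assumption: the local server listens here; adjust to match your settings.
BASE_URL = "http://localhost:1234/v1"


def build_chat_request(prompt: str, model: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str) -> str:
    """Send the request and return the first reply (needs a running server)."""
    with urllib.request.urlopen(build_chat_request(prompt, model)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With the server running and a model loaded, `ask("Say hello", "your-model-name")` would return the model's reply as a string.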

Like Jan, LM Studio does not integrate with messaging channels. It is focused entirely on local model inference and desktop chat.

Available on macOS, Windows, and Linux.

Best for: developers and AI enthusiasts who want to experiment with many local models, compare performance, and use a local API server for their own projects.

4. GPT4All

GPT4All is a free, open-source desktop AI app designed to run on consumer hardware -- including machines without a dedicated GPU. It supports multiple model formats and can run models using only your CPU, making it accessible to users with older or lower-spec hardware.

The interface is more basic than Jan or LM Studio, but it gets the job done. GPT4All also supports document ingestion, allowing you to chat with your local files (PDFs, text documents) using retrieval-augmented generation.
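Retrieval-augmented generation sounds complicated, but the core idea is small: find the pieces of your documents most relevant to the question, then hand them to the model as context. The toy sketch below uses plain word overlap for the retrieval step. It is not GPT4All's implementation (real systems typically use embeddings), and every function name here is invented for the example.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_chunks(question: str, chunks: list[str], k: int = 1) -> list[str]:
    """Return the k chunks sharing the most words with the question."""
    q = tokenize(question)
    ranked = sorted(chunks, key=lambda c: len(q & tokenize(c)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, chunks: list[str], k: int = 1) -> str:
    """Combine the retrieved context and the question into one prompt."""
    context = "\n".join(top_chunks(question, chunks, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


docs = [
    "The invoice is due on March 3.",
    "The office is closed on public holidays.",
]
print(build_prompt("When is the invoice due?", docs))
```

The resulting prompt would then be sent to whichever local model you have loaded.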

No messaging channel integration. Desktop chat only.

Available on macOS, Windows, and Linux.

Best for: users with limited hardware who want local AI without GPU requirements. If you have an older laptop and still want to run a local model, GPT4All is worth trying.

5. Msty

Msty is a desktop AI app that supports both local and cloud models in a single, polished interface. You can run local models via Ollama or connect cloud providers like OpenAI, Anthropic, and Google. The conversation history is well-organized, and the UI feels modern and responsive.

Msty stands out for its multi-model comparison feature -- you can send the same prompt to multiple models and compare their responses side by side. This is useful for evaluating which model works best for specific tasks.
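Conceptually, side-by-side comparison is a fan-out: one prompt goes to several models and the answers are collected under each model's name. The sketch below shows that pattern with stand-in functions instead of real models, so it runs without any API key. It says nothing about how Msty implements the feature, and the names `compare`, `model-a`, and `model-b` are placeholders.

```python
from typing import Callable

ModelFn = Callable[[str], str]


def compare(prompt: str, models: dict[str, ModelFn]) -> dict[str, str]:
    """Send the same prompt to every model and collect answers by name."""
    return {name: fn(prompt) for name, fn in models.items()}


# Stand-in "models": simple string functions used purely for illustration.
models = {
    "model-a": lambda p: p.upper(),
    "model-b": lambda p: p[::-1],
}

for name, answer in compare("hello", models).items():
    print(f"{name:<10} | {answer}")
```

In practice each entry would wrap a call to a cloud API or a local runtime, and the calls would usually run concurrently.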

No messaging channel integration. Msty is a desktop-only chat application.

Available on macOS, Windows, and Linux.

Best for: users who want a polished chat UI with both local and cloud models, and who value side-by-side model comparison.

6. Ollama (CLI)

Ollama is not a desktop app in the traditional sense -- it is a local model runtime with a command-line interface. You install it, pull a model (for example, `ollama pull llama3`), and interact via the terminal or through its local API.
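For a sense of what the local API looks like, here is a minimal sketch that sends a prompt to Ollama's generate endpoint using only the Python standard library. It assumes Ollama is running at its default local address and that the `llama3` model from the example above has already been pulled; the helper names are made up for illustration.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3") -> str:
    """Send the prompt and return the generated text (needs Ollama running)."""
    with urllib.request.urlopen(build_generate_request(prompt, model)) as resp:
        return json.load(resp)["response"]
```

Setting `"stream": False` asks for a single JSON reply instead of a stream of partial chunks, which keeps the example short.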

Ollama has become a de facto standard backend for local AI inference. Several apps on this list, including OpenClaw Easy and Msty, can use Ollama as their local model backend. Its model library is extensive, covering Llama, Mistral, Phi, Qwen, DeepSeek, Gemma, and more. Updates and new models appear quickly.

The trade-off is that Ollama is developer-focused: its workflow centers on the terminal and the local API rather than a full chat interface. If you want a richer visual UI, you can pair Ollama with a frontend app like OpenClaw Easy or Msty.

Available on macOS, Windows, and Linux.

Best for: developers who prefer terminal workflows, or anyone who wants a reliable local model backend for other applications.

Quick Comparison Table

| App | Price | Local Models | Cloud Models | Messaging Channels | Platforms |
|---|---|---|---|---|---|
| OpenClaw Easy | Free (BYOK) | Yes (Ollama) | Claude, ChatGPT, Gemini | WhatsApp, Telegram, Slack, Discord, Feishu, Line | macOS, Windows |
| Jan.ai | Free | Yes (GGUF) | OpenAI-compatible | None | macOS, Windows, Linux |
| LM Studio | Free | Yes (GGUF) | No | None | macOS, Windows, Linux |
| GPT4All | Free | Yes (CPU-friendly) | No | None | macOS, Windows, Linux |
| Msty | Free | Yes (Ollama) | OpenAI, Anthropic, Google | None | macOS, Windows, Linux |
| Ollama | Free | Yes (native) | No | None | macOS, Windows, Linux |

How to Get Started with OpenClaw Easy

Getting up and running takes less than five minutes. Here is the process:

1. Download OpenClaw Easy

Head to openclaw-easy.com and download the free desktop app. Available for macOS (Intel and Apple Silicon) and Windows. Run the installer and open the app.

2. Choose Your AI

In the app, go to AI Provider settings. You have two paths:

- Cloud API key -- paste your API key from Anthropic (Claude), OpenAI (ChatGPT), or Google (Gemini). You only do this once.

- Local models -- install Ollama, pull a model, and point OpenClaw Easy to your local Ollama endpoint. Fully private, fully offline.
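Before pointing any app at a local Ollama endpoint, it can help to confirm that the endpoint is reachable and see which models are installed. The sketch below does that by querying Ollama's model-listing endpoint. It assumes the default local address, and the helper names are hypothetical; it is a quick check, not part of OpenClaw Easy.

```python
import json
import urllib.request


def parse_model_names(tags_json: str) -> list[str]:
    """Extract model names from the JSON returned by the model-listing endpoint."""
    data = json.loads(tags_json)
    return [m["name"] for m in data.get("models", [])]


def list_local_models(endpoint: str = "http://localhost:11434") -> list[str]:
    """Ask a running Ollama instance which models are installed."""
    with urllib.request.urlopen(f"{endpoint}/api/tags", timeout=5) as resp:
        return parse_model_names(resp.read().decode("utf-8"))
```

If `list_local_models()` raises a connection error, Ollama is probably not running; if it returns an empty list, no model has been pulled yet.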

3. Connect a Messaging Channel

Navigate to Channels in the sidebar. Pick your platform -- WhatsApp, Telegram, Slack, Discord, Feishu, or Line -- and follow the connection flow (scan a QR code for WhatsApp, enter a bot token for Telegram, etc.). Or skip this step and just use the built-in desktop chat.

4. Start Chatting

Your AI is ready. Send a message from the desktop chat or from any connected messaging channel. The AI responds automatically. You can switch models, adjust the system prompt, and add more channels at any time.

Tip: OpenClaw Easy is the only app on this list that connects AI to your messaging apps. Other apps are great for desktop chat, but if you want AI on WhatsApp, Telegram, or Slack too, OpenClaw Easy is the clear winner.

Frequently Asked Questions

Are these apps really free?

Yes. All six apps listed here have free tiers or are entirely free. OpenClaw Easy is free with a bring-your-own-key model -- you pay only for the AI tokens you use through your API provider. Local model apps like Jan, LM Studio, GPT4All, and Ollama are completely free to use since the models run on your own hardware.

Which app has the best local AI quality?

Output quality depends mainly on the model and quantization you run rather than on the app itself. For local model management, LM Studio and Jan.ai offer the best experience: both make it easy to discover, download, and run a wide range of models, and LM Studio adds detailed performance metrics. For multi-channel utility -- running that same local model across WhatsApp, Telegram, and Slack -- OpenClaw Easy is the only option on this list.

Can I use ChatGPT on desktop without a browser?

Yes. OpenClaw Easy and Msty both support OpenAI models via API key. You get access to OpenAI's models in a native desktop app with no browser required; note that API usage is billed separately from a ChatGPT subscription. OpenClaw Easy goes further by letting you use that same access across your messaging channels.

Which app is best for Mac?

OpenClaw Easy, Jan.ai, and LM Studio all have excellent macOS support, including Apple Silicon optimization for fast local inference on M1, M2, M3, and M4 chips. If messaging channel integration matters to you, OpenClaw Easy is the best Mac option. If you want the deepest local model tooling, LM Studio and Jan are both strong choices.

What's Next?

Want to explore further? Here are some related guides: