OpenClaw is one of the best AI assistant platforms available today — but many people assume you need a paid API key to use it. That is not true. There is a completely free path: run OpenClaw with local AI models via Ollama. No API key. No monthly bill. No per-token cost. Ever.

This guide walks you through the full setup. By the end, you will have OpenClaw running on WhatsApp, Telegram, Slack, or Discord — powered by a free local AI model like Llama 3, Qwen 2.5, or Mistral — entirely on your own machine.

Your Three Options for AI in OpenClaw

Before diving into the setup, here is a quick overview of all three ways to power OpenClaw's AI:

| Option | Cost | API Key? | Quality | Privacy |
|---|---|---|---|---|
| Local models (Ollama) | Free forever | Not needed | Very good | 100% local |
| Bring Your Own Key (BYOK) | Pay-per-use (fractions of a cent per message) | Required | Excellent | API provider sees data |
| Premium plan | Fixed monthly subscription | Not needed | Excellent | API provider sees data |

This guide focuses on the free local model path. If you decide later that you want the higher quality of Claude or GPT-4o, you can switch at any time — no reinstall required.

What You Will Need

- A computer running macOS or Windows (Linux supported too)

- At least 8 GB of RAM for smaller models (Llama 3 8B, Mistral 7B). 16 GB or more recommended for larger models.

- About 4–8 GB of disk space for each 7B–8B model (the 70B model is a much larger download, roughly 40 GB)

- 10 minutes for the full setup

- No API key. No credit card. No account creation anywhere.

Apple Silicon Macs (M1/M2/M3/M4): Ollama runs exceptionally well on Apple Silicon. The unified memory architecture means even a base M1 MacBook Air handles Llama 3 8B smoothly. This is one of the best local AI setups available.
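As a rough sanity check on the RAM and disk figures above, the size of a model's weights can be approximated as parameter count times bits per weight. The sketch below is a back-of-the-envelope estimate, not a measurement. The function name, the default of 4.5 bits per weight (typical of 4-bit quantized builds), and the 1.2 overhead factor for context and runtime memory are all assumptions chosen for illustration.

```python
def estimate_memory_gb(params_billion: float,
                       bits_per_weight: float = 4.5,   # assumed: typical 4-bit quantization
                       overhead_factor: float = 1.2):  # assumed: context cache + runtime
    """Return (weights_gb, total_gb) as rough estimates in gigabytes."""
    weights_gb = params_billion * bits_per_weight / 8  # 8 bits per byte
    total_gb = weights_gb * overhead_factor
    return round(weights_gb, 1), round(total_gb, 1)


if __name__ == "__main__":
    for name, size in [("Llama 3 8B", 8), ("Mistral 7B", 7), ("Llama 3 70B", 70)]:
        weights, total = estimate_memory_gb(size)
        print(f"{name}: ~{weights} GB weights, ~{total} GB in memory")
```

Under these assumptions an 8B model needs about 4.5 GB for its weights, which is why it fits on an 8 GB machine but leaves little room for other applications. Actual usage varies with the quantization and context length.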

Step-by-Step: Use OpenClaw for Free

Step 1: Download and Install OpenClaw Easy

Go to openclaw-easy.com and download the free desktop app for your operating system:

- macOS — one-click .dmg installer for Apple Silicon and Intel

- Windows — .exe installer, no WSL2 or Node.js required

Install and open the app. This takes about 30 seconds.

Step 2: Install Ollama

Ollama is a free, open-source tool for running AI models locally on your machine. Download it from ollama.com (formerly ollama.ai). It is available for macOS, Windows, and Linux.

Install it like any normal application. Ollama runs as a background service on your machine once installed.

What is Ollama? Think of it as a local version of the ChatGPT API — it runs on your computer and serves AI model responses. OpenClaw Easy connects to it the same way it would connect to a cloud AI provider, except everything stays on your machine.
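To make the "local API" idea concrete, here is a minimal sketch of how any program can talk to Ollama over HTTP. It uses Ollama's default local address (`http://localhost:11434`) and its `/api/generate` endpoint. The helper names `build_generate_request` and `ask_local_model` are made up for this example, and this is not a description of how OpenClaw Easy connects internally.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def build_generate_request(model: str, prompt: str,
                           base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build (but do not send) a POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(model: str, prompt: str) -> str:
    """Send the prompt to the local Ollama server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["response"]
```

Once Ollama is running and a model has been pulled (the next step), calling `ask_local_model("llama3", "Say hello")` should return the model's reply as a string. The request goes to `localhost`, so nothing is sent to a cloud provider.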

Step 3: Pull a Free AI Model

Open a terminal (macOS: Terminal.app or iTerm; Windows: Command Prompt or PowerShell) and run one of the following commands to download a free model:

Llama 3 8B (recommended starting point — fast, capable, widely used):

ollama pull llama3

Qwen 2.5 7B (excellent multilingual support, strong reasoning):

ollama pull qwen2.5

Mistral 7B (fast and efficient, great for quick responses):

ollama pull mistral

DeepSeek-R1 8B (a distilled version of DeepSeek-R1 with strong step-by-step reasoning for its size):

ollama pull deepseek-r1:8b

Each model download is 4–8 GB depending on the model. This is a one-time download — the model is cached locally and available instantly on every subsequent launch.

Not sure which to pick? Start with ollama pull llama3. It is a widely used local model, runs well on most hardware, and handles general conversation and everyday questions competently. You can always pull additional models later.

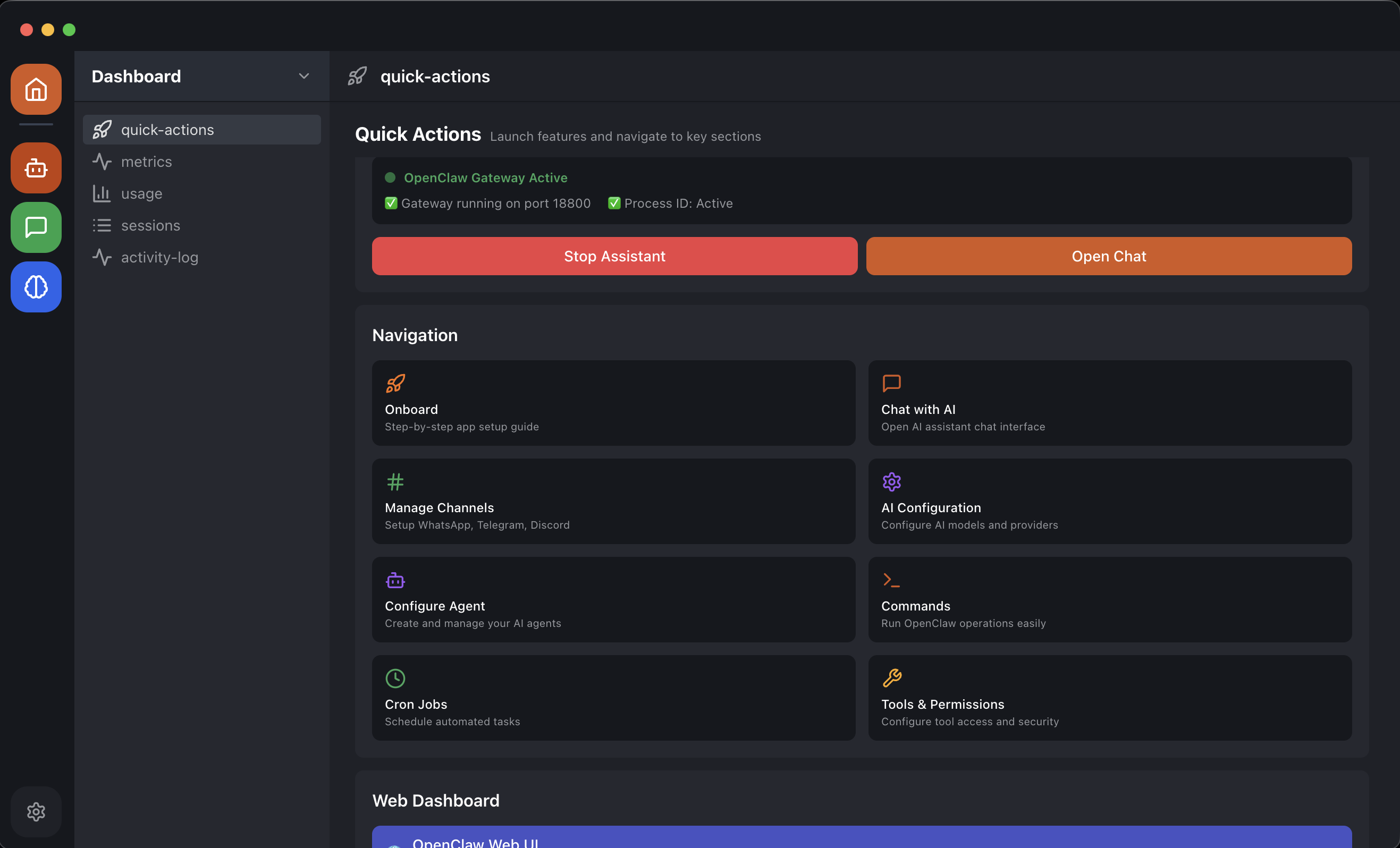

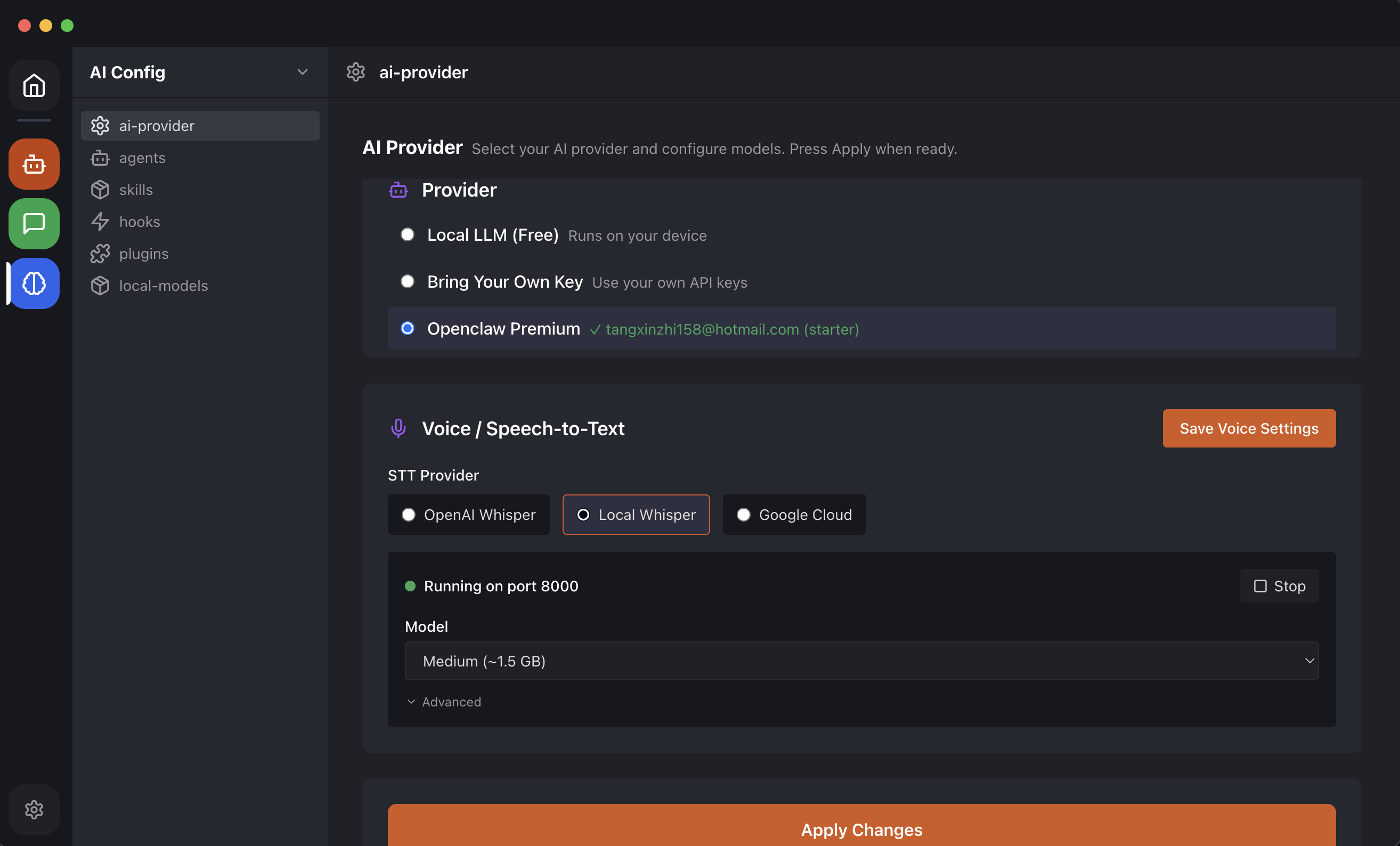

Step 4: Connect Ollama in OpenClaw Easy

In OpenClaw Easy, go to AI Provider in the sidebar. Select Local LLM (Ollama).

OpenClaw Easy automatically detects your running Ollama instance and shows you a list of the models you have downloaded. Select the model you want to use — for example, llama3.
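If the model list comes up empty, it can help to ask Ollama directly which models it has. The guide does not say how OpenClaw Easy performs its detection, so the sketch below only shows one way any client could do it: a GET request to Ollama's `/api/tags` endpoint, which returns the downloaded models as JSON. The function names are illustrative.

```python
import json
import urllib.request


def parse_model_names(tags_json: str) -> list[str]:
    """Extract model names from the JSON body returned by GET /api/tags."""
    data = json.loads(tags_json)
    return [entry["name"] for entry in data.get("models", [])]


def list_local_models(base_url: str = "http://localhost:11434") -> list[str]:
    """Ask a running Ollama server which models are downloaded."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as response:
        return parse_model_names(response.read().decode("utf-8"))
```

A connection error from `list_local_models()` usually means Ollama is not running. An empty list means it is running but no model has been pulled yet.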

No API key field. No billing setup. Just select your model and you are ready.

Step 5: Connect a Messaging Channel

Go to Channels in the sidebar. Connect your first channel:

- WhatsApp — scan the QR code with your phone

- Telegram — create a bot with @BotFather and paste the token

- Slack — authenticate via OAuth

- Discord — paste your bot token from the Developer Portal

Your free AI assistant is now live. Every message your contacts send gets answered by the local model running on your machine — at zero cost per message, forever.

The Best Free Models for OpenClaw (2026)

Here is a breakdown of the best free local models to use with OpenClaw, ranked by use case:

Llama 3 8B — Best All-Rounder

Meta's Llama 3 is the gold standard for free local AI. The 8B parameter version runs smoothly on most machines with 8 GB of RAM and delivers quality that rivals GPT-3.5 on most conversational tasks. If you only pull one model, make it this one.

ollama pull llama3

Qwen 2.5 7B — Best for Multilingual

Alibaba's Qwen 2.5 has outstanding multilingual capabilities — especially strong in Chinese, Japanese, Korean, and other Asian languages. If your WhatsApp or Telegram contacts use multiple languages, Qwen handles code-switching naturally.

ollama pull qwen2.5

DeepSeek-R1 — Best Reasoning

DeepSeek-R1 works through problems with chain-of-thought reasoning and performs well on complex questions, math, and analysis tasks. The 8B version is a smaller distilled model that runs on consumer hardware, which makes it a compelling free alternative to paid reasoning models.

ollama pull deepseek-r1:8b

Mistral 7B — Fastest Responses

Mistral 7B is lean and fast. If your priority is low response latency — important for a messaging bot where users expect quick replies — Mistral is the right choice. It handles everyday questions and tasks well at high speed.

ollama pull mistral

Llama 3 70B — Highest Quality (Requires Strong Hardware)

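The 70B version of Llama 3 is a download of roughly 40 GB at Ollama's default quantization, so it realistically needs about 48 GB or more of RAM or unified memory (or equivalent GPU memory). On a 32 GB machine it will not fit fully in memory and will run very slowly, if at all. On suitable hardware, it delivers quality much closer to large cloud models such as Claude Sonnet and GPT-4o, at no per-message cost. This is the option for power users who want maximum quality without an API bill.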

ollama pull llama3:70b

How the Cost Compares

To put the free local model path in perspective, here is what a typical day of personal AI assistant use costs across different setups:

| Setup | Cost per day | Cost per month |
|---|---|---|
| OpenClaw Easy + Ollama (local model) | $0.00 | $0.00 |
| OpenClaw Easy + Claude API (BYOK, ~100 messages) | ~$0.05–$0.20 | ~$1.50–$6 |
| OpenClaw Easy + GPT-4o mini (BYOK, ~100 messages) | ~$0.02–$0.10 | ~$0.60–$3 |
| ChatGPT Plus subscription | ~$0.67 | $20.00 |

The local model path has no per-message or subscription cost after the initial model download. The only running cost is the electricity your computer uses, which is small for typical personal use.
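The monthly figures in the table are the daily estimates multiplied by 30, and the electricity cost of a local setup can be estimated the same way. The sketch below reproduces that arithmetic. The 30 W average draw and the $0.15 per kWh price are hypothetical placeholders, so substitute your own machine's draw and your local tariff.

```python
def monthly_cost(cost_per_day: float, days: int = 30) -> float:
    """Convert a daily cost in dollars to a monthly figure."""
    return round(cost_per_day * days, 2)


def electricity_cost_per_day(avg_watts: float, hours: float,
                             price_per_kwh: float) -> float:
    """Estimate daily electricity cost: kWh used times price per kWh."""
    kwh = avg_watts / 1000 * hours
    return round(kwh * price_per_kwh, 3)


if __name__ == "__main__":
    # Table rows: low and high daily estimates for ~100 messages per day.
    print("Claude API:", monthly_cost(0.05), "to", monthly_cost(0.20))
    print("GPT-4o mini:", monthly_cost(0.02), "to", monthly_cost(0.10))
    # Hypothetical: a machine averaging 30 W extra for 24 hours at $0.15/kWh.
    print("Electricity per day:", electricity_cost_per_day(30, 24, 0.15))
```

With those placeholder numbers the electricity comes to roughly 11 cents a day. A desktop with a power-hungry GPU under constant load would cost more, and a laptop that is mostly idle would cost less.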

Limitations of Free Local Models

To be honest about the trade-offs — free local models are excellent, but there are a few things to keep in mind:

- Your computer must be on: the AI only works while OpenClaw Easy and Ollama are running. If you close your laptop, the bot goes offline.

- Response speed depends on your hardware: on older or lower-spec machines, larger models may respond slowly. Mistral 7B and Llama 3 8B are the faster choices on consumer hardware.

- No real-time knowledge: local models do not browse the web. They answer from their training data, which ends at a cutoff date. If you need real-time information, consider using a BYOK API key instead.

- Smaller models have limits: the 7B–8B models are impressive but not as capable as the largest cloud models on complex reasoning tasks. For most personal assistant and customer support use cases, the quality is usually sufficient.
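To see how the hardware point plays out on your own machine, you can measure generation speed. Ollama's non-streaming responses include an `eval_count` field (tokens generated) and an `eval_duration` field (time in nanoseconds). The helper below is a small sketch that turns those two fields into tokens per second. The function name is an assumption for this example.

```python
def tokens_per_second(response: dict) -> float:
    """Compute generation speed from an Ollama /api/generate or /api/chat response.

    eval_count is the number of generated tokens; eval_duration is in nanoseconds.
    """
    duration_ns = response.get("eval_duration", 0)
    if duration_ns <= 0:
        return 0.0  # field missing or zero: speed cannot be computed
    return response.get("eval_count", 0) * 1e9 / duration_ns
```

Running the same prompt against two models and comparing the results gives a quick, if informal, picture of which one feels more responsive in a chat.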

Best of both worlds: Start with a local model for free. If you find the quality limiting for a specific task, switch to a BYOK API key in OpenClaw Easy with one click — no reinstall, no reconfiguration of channels.

Privacy: Why Local Models Are the Gold Standard

Beyond the cost savings, running local models has a major privacy advantage: your conversations are never sent to an external AI provider. All AI processing happens on your machine.

When you use a cloud AI provider (Claude, ChatGPT, Gemini), your messages travel to Anthropic's, OpenAI's, or Google's servers for processing. These companies have privacy policies and data handling practices you have to trust.

With Ollama and a local model, the full AI inference loop happens on your hardware:

- Your contact sends a message to your WhatsApp or Telegram bot

- OpenClaw Easy receives it on your machine

- The message is sent to Ollama running locally

- The AI generates a response on your CPU/GPU

- The response goes back to your contact

At no point does your message content touch any external AI server. For personal use, business customer support, or any situation involving sensitive information, this is a meaningful advantage.
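The last three steps of the flow above can be sketched in a few lines. This is an illustration under stated assumptions, not OpenClaw Easy's actual code. It posts the incoming text to Ollama's `/api/chat` endpoint on `localhost` and returns the reply. The names `post_json` and `reply_to_message` are hypothetical, and the `send` parameter exists only so the transport can be swapped out.

```python
import json
import urllib.request
from typing import Callable

# Loopback address: requests to it never leave this machine.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def post_json(url: str, payload: dict) -> dict:
    """POST a JSON payload and return the decoded JSON response."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())


def reply_to_message(text: str, model: str = "llama3",
                     send: Callable[[str, dict], dict] = post_json) -> str:
    """Pass an incoming message to the local model and return its reply."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": text}],
        "stream": False,
    }
    result = send(OLLAMA_CHAT_URL, payload)
    return result["message"]["content"]
```

The only network address in the sketch is `localhost`, which is the point of the privacy argument. The message still travels through WhatsApp's or Telegram's own servers to reach your machine, but the AI inference itself stays local.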

Frequently Asked Questions

Can I really use OpenClaw with no API key at all?

Yes. With Ollama and a local model, OpenClaw Easy has no need for any API key. The AI runs entirely on your machine. There is no account to create, no key to manage, and no bill to pay.

Does Ollama work on Windows?

Yes. Ollama has a native Windows installer. Download it from ollama.com (formerly ollama.ai), install it, and it works alongside OpenClaw Easy on Windows without any additional setup.

How much RAM do I need?

For 7B–8B parameter models (Llama 3 8B, Mistral 7B, Qwen 2.5 7B), you need at least 8 GB of RAM, and 16 GB gives comfortable headroom. The 70B models are roughly 40 GB downloads, so plan on about 48 GB or more of RAM or unified memory.

Can I switch between a local model and a paid API?

Yes. In OpenClaw Easy, switching AI providers takes a few seconds in the AI Provider settings. You can use a local model by default and switch to Claude or GPT-4o for specific conversations where you need higher quality — without changing any channel configuration.

Will the free local model work for a business WhatsApp chatbot?

For most FAQ-style customer support, Llama 3 8B or Qwen 2.5 7B performs well. If your customers ask highly complex questions or you need nuanced, brand-voice responses, a BYOK API key with Claude or GPT-4o may serve better. You can test both and decide.

What happened to the free tier that was previously available?

The local model path has always been free — it is not a "tier" but a fundamental capability. Ollama is open source and free. The models are open weights and free. OpenClaw Easy is free to download. The combination has zero ongoing cost.

What Is Next?

You are now running OpenClaw for free with a local AI model. Here are some things to explore:

- Connect WhatsApp — read the full WhatsApp AI setup guide to get your bot live in 5 minutes.

- Set up Telegram — follow the Telegram bot guide for a step-by-step walkthrough.

- Go deeper on local LLMs — see the complete Ollama + WhatsApp guide for advanced configuration.

- Schedule automated messages — use cron jobs to send daily AI-powered messages at set times.

- Install on Windows — if you are on Windows, check the Windows install guide for platform-specific tips.

OpenClaw with local models is one of the most powerful free AI setups available anywhere. Your data stays private, your costs stay at zero, and the quality of models like Llama 3 and DeepSeek-R1 is genuinely impressive. Download OpenClaw Easy and get started today — no API key needed.